Software is moving towards microservices at full speed. From our conversations with enterprises of all sizes across many industries, it’s clear to us at Tetrate that the infrastructure of the future is highly modular, distributed, secure, manageable, and agnostic to the lower layers of the stack.

Service mesh offers multiple benefits to microservice architectures with few or no application modifications: once microservices are onboarded to the mesh, they instantly become secure, observable, and manageable modules.

The question is how to get there when your current revenue is generated by a previous generation of applications running on VMs. A common modernization approach is to develop a new, more modular, cloud-native version of the application and gradually shift traffic to it while a significant portion is still served by the stable, old-school monolith. This transition may take an extended period of time (anywhere from weeks to years) until everyone is confident that the monolith can be safely decommissioned.

Integrating VMs into the mesh

The solution to this problem is to integrate VMs into the mesh as well. While microservices running in Kubernetes can be added to a service mesh natively, enterprises need a solution that also incorporates non-Kubernetes workloads such as virtual machines. That’s why Tetrate has been at the vanguard of adding VM support to Istio and to our own flagship product, Tetrate Service Bridge.

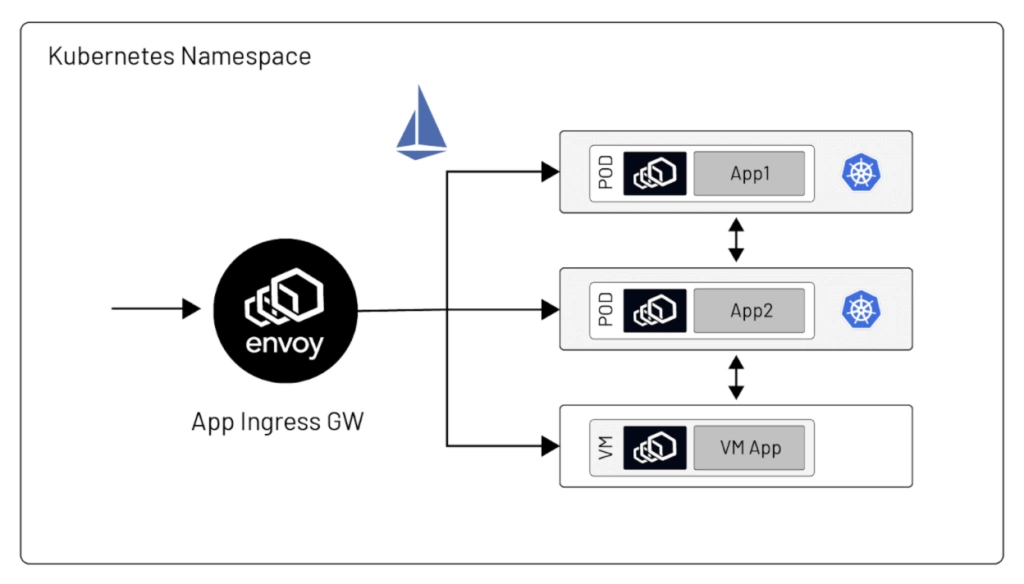

Consider microservices today that run on Kubernetes. An important concept in Kubernetes is the namespace, which defines a logical isolation boundary. Pods in a namespace can be configured together as a discrete set.

In the Kubernetes model, individual application instances are grouped into a service — a single, logical unit consumable by other applications.

Any VM added to the service mesh must be assigned to exactly one Kubernetes namespace. A VM can’t belong to multiple namespaces, because settings coming from different namespaces would then collide.

With increasing support for VMs, Istio complements the Kubernetes model by allowing its services to group together not only pods but also VMs (represented by an Istio WorkloadEntry resource). Like Kubernetes Pods and Services, an Istio WorkloadEntry exists within the isolation boundary of a Kubernetes namespace. That is why each VM must, in effect, be associated with a Kubernetes namespace.
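To make the grouping concrete, here is a minimal Python sketch of how a service’s label selector picks up both pods and VM-backed WorkloadEntries, but only within the service’s own namespace. The `Workload` type, the `select` helper, and all names and labels are hypothetical; real Kubernetes and Istio matching also involves ports, health, and other details that this sketch leaves out.

```python
from dataclasses import dataclass, field


@dataclass
class Workload:
    kind: str        # "Pod" or "WorkloadEntry" (a VM registered in the mesh)
    name: str
    namespace: str   # each workload belongs to exactly one namespace
    labels: dict[str, str] = field(default_factory=dict)


def select(namespace: str, selector: dict[str, str], workloads: list[Workload]) -> list[str]:
    """Names of the workloads a service in `namespace` with `selector` would group."""
    return [
        w.name
        for w in workloads
        # Selectors only match workloads in the service's own namespace.
        if w.namespace == namespace
        # Every selector label must be present on the workload with the same value.
        and all(w.labels.get(k) == v for k, v in selector.items())
    ]


# Hypothetical inventory mixing pods and VMs across two namespaces.
workloads = [
    Workload("Pod", "ratings-pod-1", "bookinfo", {"app": "ratings"}),
    Workload("WorkloadEntry", "ratings-vm-1", "bookinfo", {"app": "ratings"}),
    Workload("WorkloadEntry", "ratings-vm-2", "staging", {"app": "ratings"}),
    Workload("Pod", "reviews-pod-1", "bookinfo", {"app": "reviews"}),
]
print(select("bookinfo", {"app": "ratings"}, workloads))
# → ['ratings-pod-1', 'ratings-vm-1']
```

The VM in `staging` carries the same labels but is not selected, which is the namespace boundary at work.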

The Istio control plane components are located in the istio-system namespace of the Kubernetes cluster. This means that VMs need network connectivity to istiod and a few other components in the istio-system namespace. This is usually not an issue for microservices, because network connectivity between services inside a Kubernetes cluster is much more natural to configure. For virtual machines, however, basic routing and firewall rules between the VM and the Kubernetes cluster running the mesh must be in place before a VM can be onboarded successfully.
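Before onboarding, it can help to confirm from the VM that the control plane is reachable at all. The Python sketch below only attempts a plain TCP connection, so it tests routing and firewall rules, not TLS or authentication. The `can_reach` helper is hypothetical, and so is any target address you pass it: depending on the setup, the VM may reach istiod through an east-west gateway or a load balancer rather than directly. Port 15012 is istiod’s default port for xDS and certificate signing over TLS.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection handles name resolution and IPv4/IPv6 for us.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused connections, timeouts, and DNS failures are all OSError subclasses.
        return False
```

For example, `can_reach("istiod.example.internal", 15012)` run on the VM, with the hypothetical hostname replaced by however istiod is exposed to the VM network, returns `False` if a firewall rule or route is still missing.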

Enterprise cases

When we talk to enterprises, there are many “typical” scenarios that seem obvious at first glance, but in the finer technical details, the implementations vary significantly. Here are just a few examples:

- A typical use case is a Kubernetes service calling a service on a VM, or vice versa. But what happens when two (or thousands of) VM instances in the service mesh call each other? Will they behave the same way as native Kubernetes services (or your original lab setup), or will a more complex, scaled-up deployment behave differently?

- A Kubernetes pod typically represents an instance of a single, identifiable microservice. However, it’s common to host multiple applications or services on a single VM. In this case, the challenge is how to treat the applications on the VM separately in terms of traffic metrics, traffic flow, and security identity.

- Consider a typical public cloud configuration where a background service is autoscaled: during peak hours, the number of VM instances scales out to hundreds or even thousands, and during off-peak hours it is scaled back in for cost efficiency. Adding those VMs to the mesh becomes a highly dynamic task that needs to be automated, both to add new VMs to the mesh and to clean up the mesh when instances are removed.
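The automation described in the last case is essentially a reconciliation loop: compare the VM instances the autoscaler currently reports with the WorkloadEntries registered in the mesh, then create entries for new instances and delete entries for instances that are gone. The Python sketch below computes only that diff. The `Plan` type, the `reconcile` and `entry_name` helpers, the instance IDs, and the naming scheme are hypothetical, and fetching the inputs (from a cloud API and the Kubernetes API) is left out.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    to_create: list[str]  # instance IDs that need a new WorkloadEntry
    to_delete: list[str]  # WorkloadEntry names whose VM no longer exists


def entry_name(instance_id: str) -> str:
    # Hypothetical naming scheme: one WorkloadEntry per VM instance.
    return f"vm-{instance_id}"


def reconcile(running_instances: set[str], registered_entries: set[str]) -> Plan:
    """Diff the autoscaler's current instances against the mesh's WorkloadEntries."""
    desired = {entry_name(i): i for i in running_instances}
    # Instances that are running but not yet registered in the mesh.
    to_create = sorted(desired[name] for name in desired.keys() - registered_entries)
    # Entries still registered although their instance was scaled in.
    to_delete = sorted(registered_entries - desired.keys())
    return Plan(to_create, to_delete)


plan = reconcile({"i-1", "i-2", "i-3"}, {"vm-i-1", "vm-i-9"})
print(plan)
# → Plan(to_create=['i-2', 'i-3'], to_delete=['vm-i-9'])
```

In practice you would restrict `registered_entries` to the entries this automation owns (for example, filtered by a label), so the loop never deletes entries that were created by hand.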

This is just a small sample of the many configurations that exist today. Combinations of these configurations add even more complexity, which every organization needs to understand before moving to the implementation stage.

Tetrate Service Bridge

We built Tetrate Service Bridge (TSB) to handle this complexity. TSB is an enterprise-grade application connectivity platform that manages the connectivity, security, observability, and resiliency of applications running in multiple clusters, on public and hybrid clouds, and on any compute. TSB streamlines VM onboarding by automating the bootstrapping process, which reduces manual work and the likelihood of human error. All metrics, traces, and logs are collected, processed, and aggregated in a single dashboard across multiple Kubernetes clusters and VM workloads. TSB also provides granular permission control for end users and an extended graphical dashboard, based on Apache SkyWalking, that visualizes traffic in the service mesh for both Kubernetes workloads and onboarded VMs.

VM onboarding requires configuring the following three manifests:

- A WorkloadEntry that defines:

  - properties of a workload (application) running on a VM, such as labels and ports

  - properties of the VM itself, such as its network and IP address

  - the mesh identity of the workload running on the VM, such as its service account

  See the Istio documentation for more details on WorkloadEntry.

- A Sidecar (not to be confused with the sidecar container injected into a Kubernetes pod). An Istio Sidecar resource defines the configuration of the sidecar proxy deployed on the VM, such as its listening addresses and ports, and the other services that workloads (applications) running on the VM need to send outgoing requests to.

- A ServiceEntry. This is similar to the Kubernetes Service concept, but specifically for pointing to non-pod endpoints. In general, Istio supports Kubernetes Services and Istio ServiceEntries interchangeably. Both Kubernetes Services and Istio ServiceEntries can group together both Kubernetes Pods and VMs (Istio WorkloadEntries). See the Istio ServiceEntry documentation for more details.
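To make the three resources concrete, here is a minimal Python sketch that builds them for one hypothetical application, `ratings`, listening on port 9080 on a VM at `10.0.0.12` in the `bookinfo` namespace. It uses the `networking.istio.io/v1beta1` field names as we understand them; the `vm_manifests` helper, names, address, network, service account, and hostname are all assumptions. This is not TSB’s onboarding flow, which automates these steps; it only illustrates the shape of the manifests. The output is JSON, which `kubectl apply -f` accepts as well as YAML.

```python
import json


def vm_manifests(app: str, namespace: str, vm_ip: str, port: int,
                 network: str, service_account: str) -> list[dict]:
    """Build WorkloadEntry, Sidecar, and ServiceEntry manifests for one VM workload."""
    labels = {"app": app}
    meta = {"name": f"{app}-vm", "namespace": namespace}
    workload_entry = {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "WorkloadEntry",
        "metadata": dict(meta),
        "spec": {
            "address": vm_ip,                   # the VM's IP address
            "network": network,                 # network the VM belongs to
            "labels": labels,                   # workload labels, matched by selectors
            "ports": {"http": port},            # named ports of the workload
            "serviceAccount": service_account,  # mesh identity of the workload
        },
    }
    sidecar = {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "Sidecar",
        "metadata": dict(meta),
        "spec": {
            "workloadSelector": {"labels": labels},
            # Inbound: the proxy listens on the service port and forwards to the local app.
            "ingress": [{
                "port": {"number": port, "protocol": "HTTP", "name": "http"},
                "defaultEndpoint": f"127.0.0.1:{port}",
            }],
            # Outbound: services the app may call (here: its own namespace and istio-system).
            "egress": [{"hosts": ["./*", "istio-system/*"]}],
        },
    }
    service_entry = {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "ServiceEntry",
        "metadata": dict(meta),
        "spec": {
            "hosts": [f"{app}.{namespace}.mesh"],  # hypothetical hostname
            "location": "MESH_INTERNAL",
            "resolution": "STATIC",
            "ports": [{"number": port, "name": "http", "protocol": "HTTP"}],
            # Groups the VM (and any pods with the same labels) under this service.
            "workloadSelector": {"labels": labels},
        },
    }
    return [workload_entry, sidecar, service_entry]


if __name__ == "__main__":
    docs = vm_manifests("ratings", "bookinfo", "10.0.0.12", 9080,
                        "vm-network", "bookinfo-ratings")
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": docs}, indent=2))
```

All three resources share the same labels, which is what ties them together: the Sidecar and the ServiceEntry both select the workload through `workloadSelector`.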

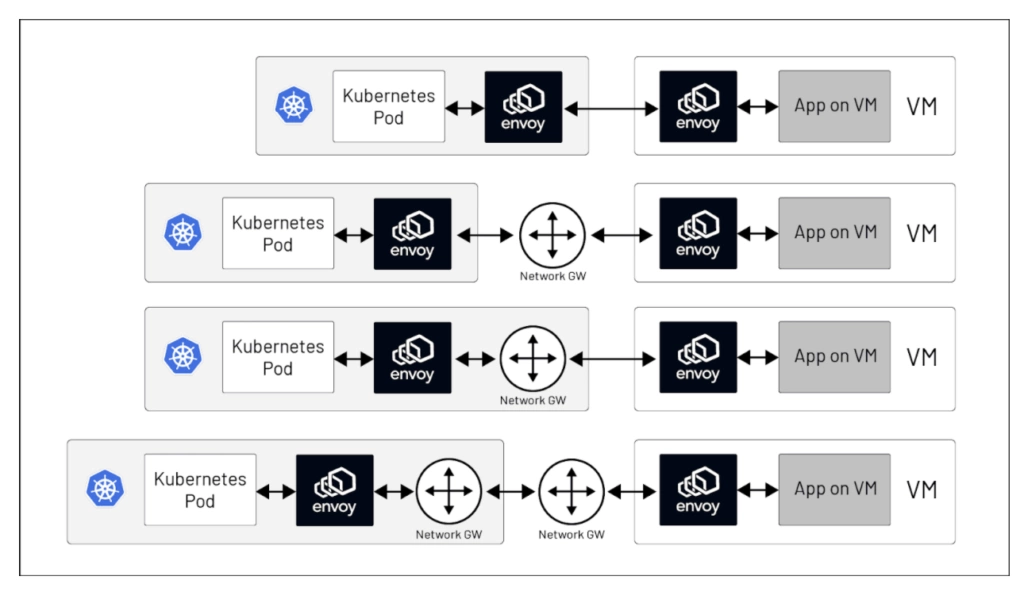

Istio supports a multi-network deployment model in which Pods/VMs in one network have no direct connectivity to Pods/VMs in another. This is achieved through the meshNetworks configuration, which lets every endpoint (VM or Pod) be assigned to a network.

To make cross-network traffic possible, the Istio model has the concept of a network gateway. If a network has a network gateway associated with it, then requests from Pods/VMs in other networks to Pods/VMs in that network will be tunnelled through that gateway.
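The routing rule above can be summarized as a small decision function. The Python sketch below takes a mapping shaped loosely after Istio’s meshNetworks setting (each network with a list of gateways) and decides whether a request goes directly to the endpoint or through the destination network’s gateway. It illustrates the rule only and is not Istio’s implementation; the `next_hop` helper, network names, addresses, and ports are hypothetical.

```python
from typing import Optional

# Hypothetical networks, shaped loosely after meshNetworks: name -> gateways.
MESH_NETWORKS = {
    "k8s-network": {"gateways": [{"address": "203.0.113.10", "port": 15443}]},
    "vm-network": {"gateways": [{"address": "198.51.100.20", "port": 15443}]},
    "lab-network": {"gateways": []},  # no gateway: unreachable from other networks
}


def next_hop(mesh_networks: dict, src_network: str, dst_network: str,
             dst_endpoint: str) -> Optional[str]:
    """Where a request from src_network to dst_endpoint is sent, or None if unreachable."""
    if src_network == dst_network:
        return dst_endpoint  # same network: connect to the endpoint directly
    gateways = mesh_networks.get(dst_network, {}).get("gateways", [])
    if gateways:
        gw = gateways[0]  # sketch simplification: always use the first gateway
        return f"{gw['address']}:{gw['port']}"  # tunnel through the destination's gateway
    return None  # different network and no gateway: no path


print(next_hop(MESH_NETWORKS, "vm-network", "k8s-network", "10.244.1.7:9080"))
# → 203.0.113.10:15443
print(next_hop(MESH_NETWORKS, "k8s-network", "k8s-network", "10.244.1.7:9080"))
# → 10.244.1.7:9080
print(next_hop(MESH_NETWORKS, "k8s-network", "lab-network", "10.1.0.5:9080"))
# → None
```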

TSB makes the meshNetworks configuration transparent to the end user: calls are dispatched across networks the way they need to be, without anyone having to spend a lot of time on this topic.

Parting thoughts

To conclude, we’d like to invite you to see TSB’s streamlined VM onboarding in action using `tctl` (Tetrate’s CLI tool). You can also view the VM’s detailed connectivity in the TSB UI.

As a final note: a huge thank you to Tetrate’s customers, who provided valuable feedback and spent hours helping us hone the design of this seamless solution. Thanks also to Tetrate engineering for the very thorough examination of real-world scenarios and for implementing an elegant and user-friendly approach. Finally, we would not be here without the Istio and Envoy communities, who drive innovation and spread the great vision of the technological revolution into every corner of the world!

Further reading

This article is a summary of a recent talk at ServiceMeshCon.

###

If you’re new to service mesh, Tetrate has a bunch of free online courses available at Tetrate Academy that will quickly get you up to speed with Istio and Envoy.

Are you using Kubernetes? Tetrate Enterprise Gateway for Envoy (TEG) is the easiest way to get started with Envoy Gateway for production use cases. Get the power of Envoy Proxy in an easy-to-consume package managed by the Kubernetes Gateway API.

Getting started with Istio? If you’re looking for the surest way to get to production with Istio, check out Tetrate Istio Subscription. Tetrate Istio Subscription has everything you need to run Istio and Envoy in highly regulated and mission-critical production environments. It includes Tetrate Istio Distro, a 100% upstream distribution of Istio and Envoy that is FIPS-verified and FedRAMP ready. For teams requiring open source Istio and Envoy without proprietary vendor dependencies, Tetrate offers the ONLY 100% upstream Istio enterprise support offering.
