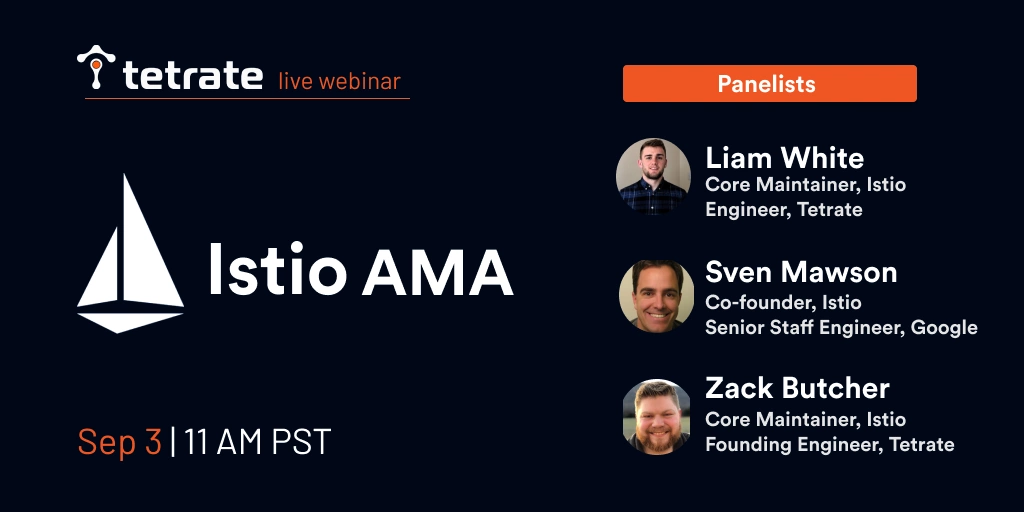

In Tetrate’s September Istio AMA session, Istio founders and contributors Zack Butcher, Sven Mawson, and Liam White discussed all things Istio, covering the latest Istio 1.7 release, what’s to come in 1.8, and practical advice for end users of Istio and the Envoy proxy.

Istio is the de facto standard open source service mesh. It decouples networking and security from applications and allows organizations to observe, secure, and manage their services by controlling their communication.

Here’s a roundup of 10 major takeaways:

1. If you’re curious about Istio performance, you can check out the latest performance testing results as well as Karl Stoney’s recent Twitter post on cost saving with Istio.

2. External Istiod (formerly known as Central Istiod) is an alpha feature in 1.7, developed by IBM, that lets users configure Istio so that all pods in an infrastructure can be controlled from a single control plane. It may still be advisable to run one control plane per cluster so that the control plane does not become a single failure domain.

3. What’s the biggest Istio production cluster that’s been deployed? Non-disclosure agreements prevent some specificity here, but many thousands of nodes have been run in a single cluster. Tens of thousands of pods have been used effectively in a single mesh, and those meshes have been multi-tenant in the sense that multiple teams or lines of business within a single company use them from different namespaces. For an example of an especially large-scale deployment, check out this talk from Suresh Visvanathan and Mrunmayi Dhume on running Istio and Kubernetes on-prem at Yahoo scale.

4. If an Envoy sidecar shuts down while the application container still has open network connections, look at the drain setting in the API, which tells Envoy to drain connections for a set amount of time. During that period, Envoy will not accept new connections but will allow existing connections to finish. Zack Butcher: A safe rule of thumb is to have the grace period be at least 1.5-2x longer than your connection TTL.
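As a small worked example of that rule of thumb, the sketch below derives a drain grace period from a connection TTL. The function name `drain_grace_period` and the 30-second TTL are illustrative assumptions, not values from the session. In Istio the resulting duration would typically be applied through the proxy config’s `terminationDrainDuration` field, but check the documentation for your version before relying on that.

```python
from datetime import timedelta


def drain_grace_period(connection_ttl: timedelta, factor: float = 2.0) -> timedelta:
    """Return a drain grace period as a multiple of the connection TTL.

    The rule of thumb is "at least 1.5-2x the connection TTL", so any
    factor below 1.5 is rejected. (Hypothetical helper, for illustration.)
    """
    if factor < 1.5:
        raise ValueError("factor should be at least 1.5x the connection TTL")
    return connection_ttl * factor


# Assumed example value: connections live for at most 30 seconds.
ttl = timedelta(seconds=30)
print(drain_grace_period(ttl, 1.5).total_seconds())  # 45.0
print(drain_grace_period(ttl).total_seconds())       # 60.0
```

With a 30-second TTL, this gives a grace period between 45 and 60 seconds, which leaves room for the longest-lived connection to finish before the sidecar exits.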

5. Projects coming in Istio 1.8 (expected in late 2020) will make multi-cloud architecture a lot simpler. For now, it comes down to how you want to set up your overall network topology across two clouds, says Zack Butcher. Istio’s installation guides describe the two basic topology patterns you can choose from. Generally speaking, assuming that you have disparate L3 networks for each cloud, we recommend the replicated control plane topology, which forces traffic between your clusters through ingresses. You then expose those ingresses based on the network topology that you want to build. You can use a VPN across your two clouds, and as long as the ingress IP addresses for your Kubernetes clusters are exposed over that VPN connection, traffic will flow normally.

Istio will work atop the L3 network that you give it, and it comes down to how you configure it. In general, the easiest way to do that configuration is to publish the ingresses of a remote cluster, plus the set of services that are behind that ingress. “The general philosophy is the same as when we build an IP network,” says Zack Butcher, which is “know where your local things are, and then know where the gateway is for the next hop.”
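The “local things, then the next-hop gateway” philosophy can be sketched as a simple lookup. This is a toy model, not Istio’s implementation: the `ClusterView` class, the service names, and the addresses (taken from documentation-reserved ranges) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ClusterView:
    """What one cluster knows: local endpoints plus remote ingress gateways."""

    # service name -> endpoints reachable directly on the local network
    local: Dict[str, List[str]] = field(default_factory=dict)
    # service name -> published ingress address of the remote cluster hosting it
    remote_gateways: Dict[str, str] = field(default_factory=dict)

    def resolve(self, service: str) -> List[str]:
        """Prefer local endpoints; otherwise send traffic to the next-hop ingress."""
        if service in self.local:
            return self.local[service]
        if service in self.remote_gateways:
            return [self.remote_gateways[service]]
        raise LookupError(f"no route to {service}")


# Hypothetical data: "reviews" runs locally, "ratings" sits behind a remote ingress.
view = ClusterView(
    local={"reviews": ["10.0.1.5:9080", "10.0.1.6:9080"]},
    remote_gateways={"ratings": "203.0.113.10:15443"},
)
print(view.resolve("reviews"))  # local endpoints
print(view.resolve("ratings"))  # the remote cluster's ingress
```

The point of the design is that each cluster only needs its own endpoints and one ingress address per remote service, which is why exposing the ingress IPs over the VPN is enough for traffic to flow.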

6. When using the egress gateway in Istio to pass through TCP traffic for different external endpoints, different ports need to be opened on the egress gateway. Is there a way to serve different endpoints from the same port on the egress gateway?

There is, if you can control server name indication (SNI) in your application. Effectively, this boils down to how Istio identifies traffic that’s coming in. Generally, for TCP traffic, we only have the IP address and the port, so separate ports help us easily identify separate external services. If you set SNI, you get a name that you can map back to a service. But it’s not easy to do today.

For the egress gateway, you can have a pass-through cluster. That’s an install-time configuration. There’s a global config that says “allow unknown” or not, and if you allow unknown, that will work at the egress.
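The difference between identifying traffic by port and identifying it by SNI can be sketched as two lookup tables. This is a conceptual model only, not gateway configuration; the hostnames, ports, and function names are hypothetical.

```python
from typing import Optional

# Hypothetical external services. With plain TCP the gateway only sees the
# destination IP and port, so each service needs its own listener port.
BY_PORT = {
    9001: "db.example.com:5432",
    9002: "cache.example.com:6379",
}

# With TLS and SNI set by the application, one listener port is enough:
# the server name in the handshake identifies the target service.
BY_SNI = {
    "db.example.com": "db.example.com:5432",
    "cache.example.com": "cache.example.com:6379",
}


def route_plain_tcp(listener_port: int) -> str:
    """Pick the upstream purely from the port the connection arrived on."""
    return BY_PORT[listener_port]


def route_tls(sni: Optional[str]) -> str:
    """Pick the upstream from the SNI name; all traffic shares one port."""
    if sni is None:
        raise ValueError("no SNI in handshake; cannot tell services apart")
    return BY_SNI[sni]


print(route_plain_tcp(9001))           # db.example.com:5432
print(route_tls("cache.example.com"))  # cache.example.com:6379
```

The `ValueError` branch is the catch mentioned above: if the application cannot set SNI, a shared port leaves nothing to distinguish one external service from another.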

7. Istio’s recent 1.6 and 1.7 releases lay the foundations for extending service mesh to VMs. Tetrate, which was founded to bring service mesh to any workload, is a driving force behind these updates. Making onboarding even smoother and simpler will be a big focus of 1.8, which is expected at the end of 2020.

Other focuses will be the ingress gateway and multi-cluster, where we’re working on new terminology, a new installation guide, and a more usable, stable External Istiod (previously called Central Istiod). Technical Oversight Committee (TOC) meetings happen every Friday at 10 a.m., and they’re open to the public. Visit www.github.com/istio/community for access to recorded meetings and docs.

8. The best way to secure traffic from pod to Envoy is to use a Unix domain socket. Zack Butcher: “Domain sockets are cool! You should use it.”

9. Want to manage a database inside a cluster? Go for it. Do you want to use a managed service? Use that! Whatever you pick, Istio will be able to work. If you deploy RDS inside your Istio cluster, that’s fine; you can even put sidecars on your RDS, with some tradeoffs.

10. Building a DNS resolver into every Envoy sidecar has been a consideration for VM integration specifically. One of the big problems is that DNS setup depends on the OS image and version you’re using, so there’s no one-size-fits-all way to set up DNS to route correctly to other services, says Istio founder Sven Mawson. Istio uses KubeDNS as the DNS resolver for the mesh; VMs don’t natively integrate with it, and wiring them up can be a painful process. He explains that if Envoy intercepts DNS queries, checks whether each one is for something in the mesh, and returns an IP address, then none of that setup is required. You can drop it onto the VM, and it works. The aim is to make it easier and more seamless.

Istio itself does not do DNS; inside Kubernetes this is fine, because KubeDNS handles resolution. To solve the naming issues of multi-cluster setups, Istio is working with the Kubernetes community on a resolution that will eventually remove the need for .global entirely (also, keep an eye out for .global’s rename to .clusterset.local!). Kubernetes has particular naming conventions, so if you want to register a name or namespace that Kubernetes doesn’t know about, you have to deal with the DNS yourself, which a lot of users do by running their own DNS instead of KubeDNS.

Istio contributor Zack Butcher adds that you only need DNS if your application needs to resolve DNS. If it doesn’t, there is a reasonable way to bypass the DNS requirement by delegating all service discovery to Istio. Using TCP as opposed to HTTP? You’ll need separate sockets.
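The DNS interception idea Sven Mawson describes can be sketched as a resolver that answers for names the mesh knows about and forwards everything else. This is a minimal model under stated assumptions, not how the sidecar is implemented: `make_resolver`, the mesh hostnames, and the fake upstream resolver are all hypothetical.

```python
from typing import Callable, Dict


def make_resolver(
    mesh_hosts: Dict[str, str],
    upstream: Callable[[str], str],
) -> Callable[[str], str]:
    """Build a resolver that answers mesh names itself and forwards the rest."""

    def resolve(hostname: str) -> str:
        # Normalize: DNS names are case-insensitive and may end with a dot.
        name = hostname.rstrip(".").lower()
        if name in mesh_hosts:
            return mesh_hosts[name]  # answered locally, no OS-level DNS setup
        return upstream(name)        # not a mesh name: defer to normal DNS

    return resolve


# Hypothetical mesh service table and a stand-in for the VM's regular resolver.
mesh = {"reviews.default.svc.cluster.local": "10.96.12.7"}


def fake_upstream(name: str) -> str:
    return "198.51.100.20"


resolve = make_resolver(mesh, fake_upstream)
print(resolve("reviews.default.svc.cluster.local."))  # 10.96.12.7
print(resolve("example.org"))                         # 198.51.100.20
```

Because the mesh lookup happens before the query ever reaches the operating system’s resolver, the VM’s image and DNS configuration stop mattering for in-mesh names, which is the setup burden the feature aims to remove.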

Stay on top of Istio

For more of this, check out the entire #TetrateAMA YouTube playlist and subscribe to Tetrate’s channel. Follow @tetrateio or contact us to hear about upcoming AMAs related to service mesh and open source.

This blog is a synopsis of the #TetrateAMA session featuring Sven Mawson of Google and Tetrate’s Zack Butcher and Liam White.

Tetrate content writer Tevah Platt compiled and edited this blog content. The session was moderated by Tia Louden with video editing by Aswin Behera.
